Signal processing analysis is a fundamental discipline in engineering and data science that involves the application of mathematical and computational techniques to analyze, modify, and extract information from signals. A signal is defined as any measurable quantity that varies over one or more independent variables, typically time or space. These signals are present across numerous domains including telecommunications, audio engineering, radar systems, medical diagnostics, and control systems.

The core purpose of signal processing is to transform raw signal data into useful information that can support decision-making processes and optimize system performance. This transformation involves various operations such as filtering, amplification, modulation, demodulation, and feature extraction. With the rapid advancement of digital technology and the proliferation of sensors and data acquisition systems, the volume and complexity of signal data have grown substantially, necessitating more sophisticated processing methods.

Signal processing analysis has practical applications that directly impact system functionality and reliability. In telecommunications, it enables clear voice transmission and high-speed data communication. In medical technology, it facilitates accurate diagnosis through processed imaging and physiological monitoring. Audio systems rely on signal processing for noise reduction and sound enhancement.

The increasing adoption of Internet of Things (IoT) devices, autonomous systems, and artificial intelligence has further expanded the demand for advanced signal processing capabilities. Current research focuses on addressing computational complexity, real-time processing requirements, noise mitigation, and the development of adaptive algorithms that can handle diverse signal characteristics across different applications.

Key Takeaways

- Signal processing analysis is crucial for accurate data interpretation and decision-making.

- Overcoming challenges in signal processing requires advanced techniques and algorithms.

- Machine learning and statistical methods significantly enhance signal processing capabilities.

- Real-time data processing and effective visualization improve analysis outcomes.

- Emerging trends focus on innovation and integration for more efficient signal processing solutions.

Importance of Data Interpretation in Signal Processing

Data interpretation is a fundamental aspect of signal processing analysis, as it transforms raw data into actionable insights. The ability to accurately interpret signals can lead to significant advancements in various fields, including telecommunications, where clear signal transmission is essential for effective communication. In medical diagnostics, precise interpretation of signals can aid in early disease detection and treatment planning. Thus, the importance of data interpretation cannot be overstated; it is the bridge that connects raw data to meaningful conclusions.

Moreover, effective data interpretation allows for the identification of patterns and trends within signals that may not be immediately apparent. By employing various analytical techniques, professionals can uncover hidden relationships within the data that can inform strategic decisions. For instance, in financial markets, analyzing trading signals can provide insights into market trends and investor behavior. As such, the role of data interpretation in signal processing analysis is not only about understanding what the data represents but also about leveraging that understanding to drive innovation and improve outcomes across diverse sectors.

Common Challenges in Signal Processing Analysis

Despite its importance, signal processing analysis is fraught with challenges that can hinder effective data interpretation. One of the most prevalent issues is noise interference, which can obscure the underlying signal and lead to inaccurate conclusions. Noise can originate from various sources, including environmental factors or inherent limitations in measurement devices. As a result, analysts must develop robust filtering techniques to isolate the true signal from unwanted noise, a task that often requires significant expertise and resources.

Another challenge lies in the sheer volume and complexity of data generated in modern applications. With the advent of big data, analysts are often inundated with vast amounts of information that must be processed and interpreted quickly. This overwhelming influx can lead to information overload, making it difficult for analysts to discern relevant insights from irrelevant data points. Additionally, the dynamic nature of many signals means that they can change rapidly over time, necessitating real-time analysis capabilities that many traditional methods may not support.

Techniques for Enhancing Signal Processing Analysis

To address the challenges inherent in signal processing analysis, various techniques have been developed to enhance the accuracy and efficiency of data interpretation. One such technique is adaptive filtering, which allows for real-time adjustments to filter parameters based on changing signal characteristics. This approach enables analysts to maintain signal integrity even in the presence of fluctuating noise levels, thereby improving overall analysis outcomes.
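
As a rough illustration of adaptive filtering, the sketch below implements a least-mean-squares (LMS) filter in NumPy and uses it for noise cancellation. LMS is only one of several adaptive algorithms, and the setup is an assumption made for the example: a second sensor is presumed to record the noise alone, and the names `lms_filter` and `noise_path`, along with the tap count and step size, are hypothetical choices.

```python
import numpy as np

def lms_filter(reference, primary, num_taps=8, step_size=0.01):
    """Least-mean-squares adaptive filter.

    Learns weights so that the filtered `reference` tracks the part of
    `primary` that is correlated with it.
    Returns (estimate, error, weights).
    """
    reference = np.asarray(reference, dtype=float)
    primary = np.asarray(primary, dtype=float)
    weights = np.zeros(num_taps)
    estimate = np.zeros(len(primary))
    error = np.zeros(len(primary))
    for n in range(num_taps - 1, len(primary)):
        # Most recent num_taps reference samples, newest first.
        window = reference[n - num_taps + 1:n + 1][::-1]
        estimate[n] = weights @ window
        error[n] = primary[n] - estimate[n]
        # Gradient-descent step on the instantaneous squared error.
        weights += 2.0 * step_size * error[n] * window
    return estimate, error, weights

rng = np.random.default_rng(0)
n_samples = 5000
t = np.arange(n_samples)
clean = np.sin(2 * np.pi * 0.01 * t)      # signal of interest
noise = rng.normal(size=n_samples)        # noise seen by the reference sensor

# Hypothetical noise path: the noise reaches the primary sensor
# through a short FIR channel before adding to the clean signal.
noise_path = np.array([0.6, -0.3, 0.1])
primary = clean + np.convolve(noise, noise_path)[:n_samples]

# The error output is the primary input with the estimated noise removed.
_, recovered, weights = lms_filter(noise, primary, num_taps=4, step_size=0.005)

# Compare against the clean signal after the filter has had time to converge.
mse_before = np.mean((primary[1000:] - clean[1000:]) ** 2)
mse_after = np.mean((recovered[1000:] - clean[1000:]) ** 2)
print(f"MSE before: {mse_before:.3f}, after: {mse_after:.3f}")
```

The step size trades convergence speed against steady-state error: larger values adapt faster but leave more residual noise, and values that are too large make the filter unstable.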

Another effective technique is the wavelet transform, which provides a multi-resolution analysis of signals. The Fourier transform decomposes a signal into sine and cosine components that span the entire record, so it reveals which frequencies are present but not when they occur. Wavelet transforms instead use short, scaled basis functions, allowing localized analysis in both time and frequency. This capability is particularly beneficial for analyzing non-stationary signals whose frequency content varies over time.
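
To make the idea of time localization concrete, here is a minimal sketch of one level of the Haar transform, the simplest wavelet, written directly in NumPy. The function names `haar_dwt` and `haar_idwt` and the test signal are illustrative assumptions; a dedicated wavelet library would normally be used for real work.

```python
import numpy as np

def haar_dwt(signal):
    """One level of the Haar discrete wavelet transform.

    Returns (approximation, detail); the input length must be even.
    """
    x = np.asarray(signal, dtype=float)
    if len(x) % 2:
        raise ValueError("signal length must be even")
    pairs = x.reshape(-1, 2)
    approx = (pairs[:, 0] + pairs[:, 1]) / np.sqrt(2)   # local averages (low-pass)
    detail = (pairs[:, 0] - pairs[:, 1]) / np.sqrt(2)   # local differences (high-pass)
    return approx, detail

def haar_idwt(approx, detail):
    """Invert one Haar level, reconstructing the original samples."""
    x = np.empty(2 * len(approx))
    x[0::2] = (approx + detail) / np.sqrt(2)
    x[1::2] = (approx - detail) / np.sqrt(2)
    return x

# A smooth tone with a brief spike at sample 301 (an illustrative transient).
t = np.arange(512)
signal = np.sin(2 * np.pi * t / 128)
signal[301] += 2.0

approx, detail = haar_dwt(signal)

# Detail coefficient k covers samples 2k and 2k + 1, so the largest
# coefficient points at the pair of samples containing the spike.
spike_location = 2 * int(np.argmax(np.abs(detail)))
reconstructed = haar_idwt(approx, detail)
print(spike_location, np.allclose(reconstructed, signal))
```

The detail coefficients stay small wherever the signal is smooth and become large only near the transient, which is the localization property described above. Applying `haar_dwt` again to `approx` would give the next, coarser resolution level.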

By employing these advanced techniques, analysts can significantly enhance their ability to interpret complex signals accurately.

Utilizing Advanced Algorithms for Improved Data Interpretation

| Metric | Description | Typical Value/Range | Unit |
|---|---|---|---|
| Signal-to-Noise Ratio (SNR) | Ratio of signal power to noise power | 20 – 60 | dB |
| Sampling Frequency | Number of samples per second | 8,000 – 192,000 | Hz |
| Bit Depth | Number of bits per sample | 8 – 32 | bits |
| Frequency Resolution | Smallest distinguishable frequency difference | 0.1 – 10 | Hz |
| Dynamic Range | Range between smallest and largest signal amplitude | 60 – 120 | dB |
| Latency | Delay introduced by processing | 1 – 50 | ms |
| Filter Order | Number of delay elements in the filter (an FIR filter of order N has N + 1 coefficients) | 1 – 100 | unitless |
| Mean Squared Error (MSE) | Average squared difference between estimated and true signal | 0 – 0.1 | unitless |
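
Several of the metrics in the table follow from short formulas. The sketch below computes four of them with NumPy; the helper names are hypothetical, the "noise" is a deterministic sinusoid chosen only so the numbers are reproducible, and the dynamic-range formula assumes an ideal uniform quantizer.

```python
import numpy as np

def snr_db(signal, noise):
    """Signal-to-noise ratio in decibels: 10 * log10(P_signal / P_noise)."""
    p_signal = np.mean(np.square(signal))
    p_noise = np.mean(np.square(noise))
    return float(10.0 * np.log10(p_signal / p_noise))

def mean_squared_error(estimate, truth):
    """Average squared difference between an estimate and the true signal."""
    return float(np.mean((np.asarray(estimate) - np.asarray(truth)) ** 2))

def frequency_resolution(sampling_hz, num_samples):
    """Spacing between DFT bins for a record of num_samples samples."""
    return sampling_hz / num_samples

def ideal_dynamic_range_db(bit_depth):
    """Dynamic range of an ideal uniform quantizer: 20 * log10(2 ** bits)."""
    return float(20.0 * np.log10(2.0 ** bit_depth))

sampling_hz = 8000
t = np.arange(sampling_hz) / sampling_hz          # one second of samples
tone = np.sin(2 * np.pi * 440 * t)                # amplitude 1.0
noise = 0.1 * np.sin(2 * np.pi * 1000 * t)        # stand-in for noise, amplitude 0.1

print(snr_db(tone, noise))                        # amplitude ratio of 10 gives 20 dB
print(mean_squared_error(tone + noise, tone))     # equals the noise power
print(frequency_resolution(sampling_hz, len(t)))  # 1 Hz for a one-second record
print(ideal_dynamic_range_db(16))                 # roughly 96 dB for 16-bit samples
```

Note that frequency resolution depends on the record length as well as the sampling frequency: doubling the number of samples at a fixed rate halves the bin spacing.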

The integration of advanced algorithms into signal processing analysis has revolutionized the field by providing more sophisticated tools for data interpretation. Machine learning algorithms, for instance, have gained prominence due to their ability to learn from data patterns and make predictions based on historical information. These algorithms can be trained on large datasets to identify trends and anomalies that may not be easily detectable through traditional analytical methods.

Additionally, algorithms such as support vector machines (SVM) and neural networks have shown great promise in classifying and predicting outcomes based on signal data. By leveraging these advanced algorithms, analysts can improve their decision-making processes and enhance the accuracy of their interpretations. The continuous evolution of algorithmic techniques ensures that signal processing analysis remains at the forefront of technological advancements.
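
The general workflow of classifying signals (extract features, fit a model, predict) can be sketched without any machine learning library. The example below deliberately substitutes a nearest-centroid classifier for an SVM or neural network to keep it short, and it runs on synthetic tones; the frequency bands, noise level, and every function name are assumptions made for illustration.

```python
import numpy as np

rng = np.random.default_rng(42)
SAMPLING_HZ = 256
NUM_SAMPLES = 256

def make_signal(freq_hz):
    """Synthetic noisy tone with a random phase."""
    t = np.arange(NUM_SAMPLES) / SAMPLING_HZ
    phase = rng.uniform(0, 2 * np.pi)
    return np.sin(2 * np.pi * freq_hz * t + phase) + 0.3 * rng.normal(size=NUM_SAMPLES)

def band_features(signal):
    """Fraction of spectral energy below and above 32 Hz."""
    power = np.abs(np.fft.rfft(signal)) ** 2
    freqs = np.fft.rfftfreq(len(signal), d=1 / SAMPLING_HZ)
    low = power[freqs < 32].sum()
    high = power[freqs >= 32].sum()
    return np.array([low, high]) / (low + high)

def make_dataset(n_per_class):
    """Class 0: tones between 5 and 15 Hz. Class 1: tones between 60 and 80 Hz."""
    features, labels = [], []
    for label, (lo, hi) in enumerate([(5, 15), (60, 80)]):
        for _ in range(n_per_class):
            features.append(band_features(make_signal(rng.uniform(lo, hi))))
            labels.append(label)
    return np.array(features), np.array(labels)

X_train, y_train = make_dataset(20)
X_test, y_test = make_dataset(10)

# "Training" a nearest-centroid model is just averaging each class's features.
centroids = np.array([X_train[y_train == c].mean(axis=0) for c in (0, 1)])

# Predict the class whose centroid is closest to each test feature vector.
distances = np.linalg.norm(X_test[:, None, :] - centroids[None, :, :], axis=2)
predictions = distances.argmin(axis=1)
accuracy = float((predictions == y_test).mean())
print(f"accuracy: {accuracy:.2f}")
```

The two classes here are separated by construction, so the result says nothing about real-world performance. The point is the shape of the pipeline, into which a more capable model could be dropped in place of the centroid step.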

The Role of Machine Learning in Signal Processing Analysis

Machine learning has emerged as a transformative force in signal processing analysis, offering new avenues for extracting insights from complex datasets.

Automating parts of the analysis with trained models not only increases efficiency but also reduces the potential for human error.

Furthermore, machine learning models can adapt to new data inputs over time, allowing for continuous improvement in their predictive capabilities. For example, in medical imaging, machine learning algorithms can be trained on vast datasets of images to identify subtle patterns indicative of specific conditions. As these models are exposed to more data, their accuracy improves, leading to better diagnostic outcomes.

The integration of machine learning into signal processing analysis represents a significant leap forward in the quest for more effective data interpretation.

Integrating Signal Processing Analysis with Statistical Methods

The integration of statistical methods into signal processing analysis enhances the robustness of data interpretation by providing a framework for quantifying uncertainty and variability within signals. Statistical techniques such as regression analysis and hypothesis testing allow analysts to draw meaningful conclusions from their data while accounting for potential errors or biases. Moreover, statistical methods can aid in model validation by providing metrics that assess the performance of predictive models developed through signal processing techniques.

For instance, using statistical measures like precision and recall can help determine how well a model performs in classifying signals accurately. By combining statistical methods with signal processing analysis, analysts can achieve a more comprehensive understanding of their data and make informed decisions based on solid evidence.
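
Precision and recall can be computed from a handful of counts, as the following sketch shows. The function name `precision_recall` and the label lists are hypothetical; the definitions themselves are the standard ones (precision is the share of predicted positives that are correct, recall is the share of actual positives that are found).

```python
def precision_recall(true_labels, predicted_labels, positive=1):
    """Return (precision, recall) for the given positive class."""
    true_pos = false_pos = false_neg = 0
    for truth, predicted in zip(true_labels, predicted_labels):
        if predicted == positive and truth == positive:
            true_pos += 1
        elif predicted == positive:
            false_pos += 1      # flagged, but not actually positive
        elif truth == positive:
            false_neg += 1      # positive, but missed by the model
    precision = true_pos / (true_pos + false_pos) if true_pos + false_pos else 0.0
    recall = true_pos / (true_pos + false_neg) if true_pos + false_neg else 0.0
    return precision, recall

# Illustrative labels: 1 marks a signal segment containing an event.
truth     = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0]
predicted = [1, 1, 1, 0, 1, 0, 0, 0, 0, 0]

precision, recall = precision_recall(truth, predicted)
print(precision, recall)   # 3 true positives, 1 false positive, 1 false negative
```

Reporting both numbers matters when events are rare: a detector that never fires avoids every false alarm yet has zero recall.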

Enhancing Signal Processing Analysis with Real-time Data Processing

The ability to process data in real-time has become increasingly important in signal processing analysis as industries demand quicker insights and responses. Real-time data processing allows analysts to monitor signals continuously and make immediate adjustments based on changing conditions. This capability is particularly crucial in applications such as telecommunications and finance, where timely decision-making can significantly impact outcomes.

To facilitate real-time processing, various technologies have been developed that enable rapid data acquisition and analysis. Stream processing frameworks allow for the continuous flow of data through analytical pipelines, ensuring that insights are generated as soon as new information becomes available. By enhancing signal processing analysis with real-time capabilities, organizations can respond proactively to emerging trends and challenges.
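
The core idea of stream processing, handling each sample as it arrives instead of waiting for a complete recording, can be sketched with a Python generator. This is a single-process toy, not a stream processing framework, and `sensor_feed` is a hypothetical stand-in for a live source.

```python
from collections import deque

def streaming_moving_average(samples, window_size):
    """Yield the mean of the last `window_size` samples as each one arrives."""
    window = deque(maxlen=window_size)
    running_sum = 0.0
    for sample in samples:
        if len(window) == window_size:
            running_sum -= window[0]   # oldest value is about to be evicted
        window.append(sample)
        running_sum += sample
        yield running_sum / len(window)

def sensor_feed():
    """Hypothetical stand-in for a live source such as a socket or ADC driver."""
    yield from [1.0, 2.0, 3.0, 4.0, 10.0, 4.0]

# Each output is available as soon as its input sample has been read.
smoothed = list(streaming_moving_average(sensor_feed(), window_size=3))
print(smoothed)
```

Because the generator keeps only the current window and a running sum, its memory use and per-sample cost stay constant no matter how long the stream runs.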

Best Practices for Data Visualization in Signal Processing Analysis

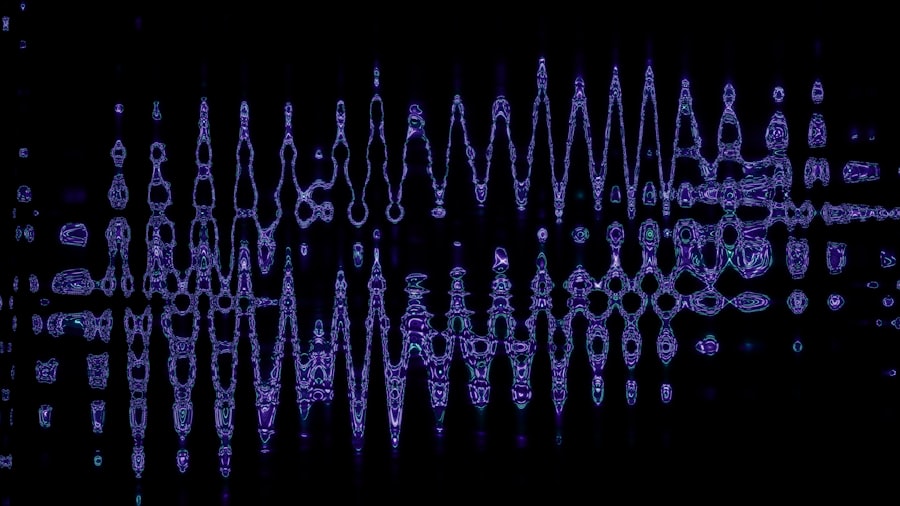

Effective data visualization is essential for communicating insights derived from signal processing analysis clearly and concisely. Best practices in this area emphasize the importance of selecting appropriate visualization techniques that align with the nature of the data being analyzed. For instance, time-series plots are particularly useful for displaying changes in signals over time, while scatter plots can effectively illustrate relationships between different variables.

Additionally, incorporating interactive elements into visualizations can enhance user engagement and facilitate deeper exploration of the data. Tools that allow users to manipulate visualizations or drill down into specific aspects of the data can lead to more meaningful interpretations. By adhering to best practices in data visualization, analysts can ensure that their findings are accessible and comprehensible to a broader audience.

Case Studies of Successful Signal Processing Analysis Implementation

Numerous case studies illustrate the successful implementation of signal processing analysis across various industries. In healthcare, for example, researchers have utilized advanced signal processing techniques to analyze electrocardiogram (ECG) signals for early detection of cardiac abnormalities. By applying machine learning algorithms to these signals, they were able to identify patterns indicative of potential heart issues with remarkable accuracy.

In telecommunications, companies have employed signal processing analysis to optimize network performance by analyzing call quality metrics and identifying sources of interference. By implementing adaptive filtering techniques and real-time monitoring systems, they have significantly improved user experience and reduced service disruptions. These case studies highlight the transformative potential of effective signal processing analysis when applied strategically within different contexts.

Future Trends and Innovations in Signal Processing Analysis

As technology continues to advance at an unprecedented pace, future trends in signal processing analysis are likely to be shaped by developments in artificial intelligence (AI), quantum computing, and edge computing.

Quantum computing holds promise for revolutionizing signal processing by providing unprecedented computational power for complex analyses. This could lead to breakthroughs in areas such as real-time image processing or large-scale data interpretation where traditional computing methods may struggle.

Additionally, edge computing will facilitate faster data processing by bringing computation closer to the source of data generation. This trend will be particularly beneficial for applications requiring immediate insights from sensor networks or IoT devices.

In conclusion, signal processing analysis remains a dynamic field with immense potential for innovation and improvement. By embracing advanced techniques and technologies while addressing existing challenges, analysts can continue to unlock valuable insights from complex signals across diverse applications.



FAQs

What is signal processing analysis?

Signal processing analysis involves the examination, interpretation, and manipulation of signals to extract useful information, improve signal quality, or prepare signals for further processing.

What types of signals are analyzed in signal processing?

Signals can be analog or digital and include audio, video, sensor data, communication signals, and biomedical signals, among others.

What are the main goals of signal processing analysis?

The primary goals include noise reduction, feature extraction, signal enhancement, data compression, and pattern recognition.

What are common techniques used in signal processing analysis?

Common techniques include Fourier transform, filtering, wavelet transform, time-frequency analysis, and statistical signal processing.

What is the difference between time-domain and frequency-domain analysis?

Time-domain analysis examines signals with respect to time, while frequency-domain analysis studies the signal’s frequency components, often using transforms like the Fourier transform.
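
As a small illustration of moving between the two views, the sketch below builds a signal in the time domain and uses NumPy's real-input FFT to read off its frequency components. The sampling rate, tone frequencies, and amplitudes are arbitrary choices for the example.

```python
import numpy as np

sampling_hz = 1000
t = np.arange(sampling_hz) / sampling_hz          # one second of samples

# Time domain: two tones summed together are hard to tell apart by eye.
signal = np.sin(2 * np.pi * 50 * t) + 0.5 * np.sin(2 * np.pi * 120 * t)

# Frequency domain: the same data expressed as energy per frequency bin.
spectrum = np.fft.rfft(signal)
freqs = np.fft.rfftfreq(len(signal), d=1 / sampling_hz)

# Scale so that a bin's magnitude matches the sinusoid's amplitude
# (valid for bins other than DC and Nyquist).
magnitude = np.abs(spectrum) * 2 / len(signal)

strongest = np.argsort(magnitude)[-2:][::-1]      # two largest bins, biggest first
peak_freqs = freqs[strongest]
print(peak_freqs, magnitude[strongest])
```

The tones line up exactly with FFT bins here because each completes a whole number of cycles in the record. With real measurements the energy usually leaks into neighboring bins, which is why window functions are commonly applied first.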

What are some applications of signal processing analysis?

Applications include telecommunications, audio and speech processing, medical imaging, radar and sonar systems, and financial data analysis.

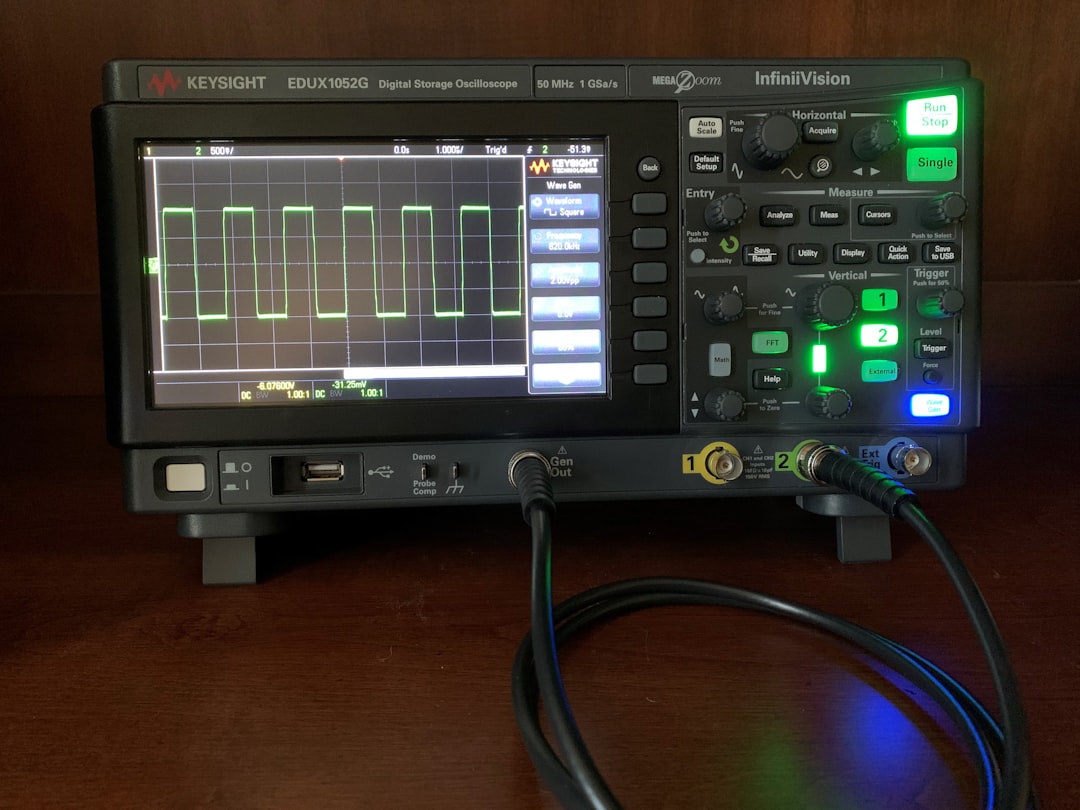

What tools are commonly used for signal processing analysis?

Popular tools include MATLAB, Python libraries (such as NumPy and SciPy), LabVIEW, and specialized hardware like digital signal processors (DSPs).

What is the role of filtering in signal processing analysis?

Filtering is used to remove unwanted components or noise from a signal, allowing for clearer interpretation and analysis.
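
A minimal sketch of this idea is a windowed-sinc low-pass FIR filter built with NumPy alone. The function name `lowpass_fir`, the cutoff, the tap count, and the test signal are assumptions for illustration; libraries such as SciPy offer ready-made filter design routines that would normally be preferred.

```python
import numpy as np

def lowpass_fir(cutoff_hz, sampling_hz, num_taps=101):
    """Windowed-sinc low-pass FIR coefficients using a Hamming window."""
    n = np.arange(num_taps) - (num_taps - 1) / 2   # tap indices centered on zero
    fc = cutoff_hz / sampling_hz                   # cutoff in cycles per sample
    taps = 2 * fc * np.sinc(2 * fc * n) * np.hamming(num_taps)
    return taps / taps.sum()                       # normalize to unity gain at DC

sampling_hz = 1000
t = np.arange(2000) / sampling_hz
wanted = np.sin(2 * np.pi * 5 * t)                 # slow component to keep
interference = 0.5 * np.sin(2 * np.pi * 200 * t)   # fast component to remove

taps = lowpass_fir(cutoff_hz=30, sampling_hz=sampling_hz)

# mode="same" centers the odd-length filter, so the output is not delayed.
filtered = np.convolve(wanted + interference, taps, mode="same")

# Ignore the first and last 100 samples, where the filter runs off the record.
max_error = np.max(np.abs(filtered[100:-100] - wanted[100:-100]))
print(f"largest deviation from the wanted signal: {max_error:.4f}")
```

A longer filter gives a sharper transition between the passband and the stopband at the cost of more computation and, in a causal real-time setting, more latency.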

How does digital signal processing differ from analog signal processing?

Digital signal processing involves discrete signals and uses algorithms for analysis, while analog signal processing deals with continuous signals using electronic circuits.

What skills are important for someone working in signal processing analysis?

Key skills include knowledge of mathematics (especially linear algebra and calculus), programming, understanding of signal theory, and experience with relevant software tools.